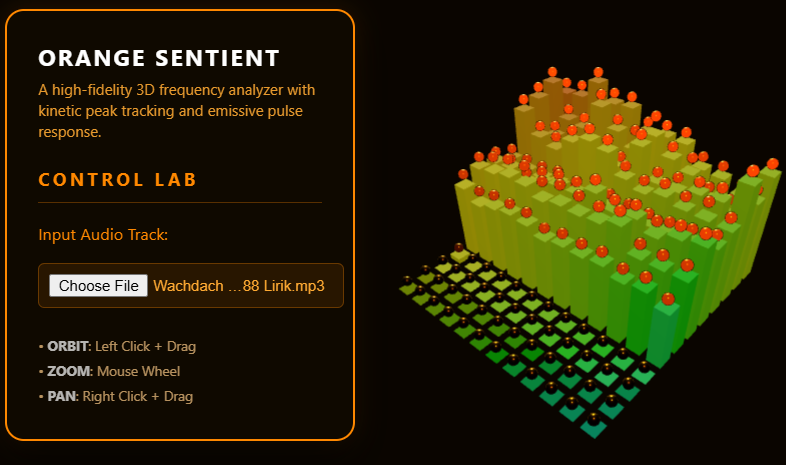

Orange Lab | Next-Gen Audio Visualization Tools

Experience high-fidelity 3D audio visualizers. Transform your music into immersive kinetic art with Orange Lab's sentient tracking technology.

1. The Core Engine

The logic is driven by the Web Audio API and a custom Kinetic State Machine.

- Fast Fourier Transform (FFT): The engine uses an `AnalyserNode` to perform an FFT on the audio signal. It decomposes the sound into frequency bins (determined by `fftSize: 512`, which yields 256 bins because `frequencyBinCount` is always half of `fftSize`), which are then mapped to the $12 \times 12$ grid.
- Kinetic Tracking: Each "Peak" (the floating spheres) has its own state containing `velocity` and `currentHeight`. Unlike simple animations, these objects follow a basic Euler integration physics model where they are pushed upward by audio energy and pulled downward by a constant `GRAVITY` value.
- Integrity Protection: A `MutationObserver` acts as a security watchdog, monitoring the DOM for any removal or modification of the attribution footer. If the branding is tampered with, the engine triggers a `location.reload()` to protect the developer's IP.
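The kinetic tracking step can be sketched in a few lines. This is a minimal forward Euler update under stated assumptions, not the engine's actual code: the constant values, the `PUSH_GAIN` name, the `stepPeak` helper, and the floor at height 0 are all hypothetical, and `energy` is assumed to be a bin magnitude normalized to the range 0 to 1.

```javascript
// Hypothetical tuning constants; the original values are not given in the text.
const GRAVITY = 9.8;   // constant downward acceleration
const PUSH_GAIN = 30;  // how strongly audio energy accelerates a peak upward

// Advance one peak by dt seconds using forward (explicit) Euler integration.
// `energy` is assumed to be a normalized magnitude in [0, 1].
function stepPeak(peak, energy, dt) {
  const acceleration = energy * PUSH_GAIN - GRAVITY;

  // Forward Euler: position uses the velocity from the start of the step,
  // then velocity is updated from the current acceleration.
  peak.currentHeight += peak.velocity * dt;
  peak.velocity += acceleration * dt;

  // Assumed floor: a peak resting at height 0 cannot keep falling.
  if (peak.currentHeight <= 0) {
    peak.currentHeight = 0;
    if (peak.velocity < 0) peak.velocity = 0;
  }
  return peak;
}
```

Calling `stepPeak(peak, energy, dt)` once per animation frame with the frame's delta time keeps the motion independent of frame rate, at the cost of the usual Euler drift for large `dt`.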

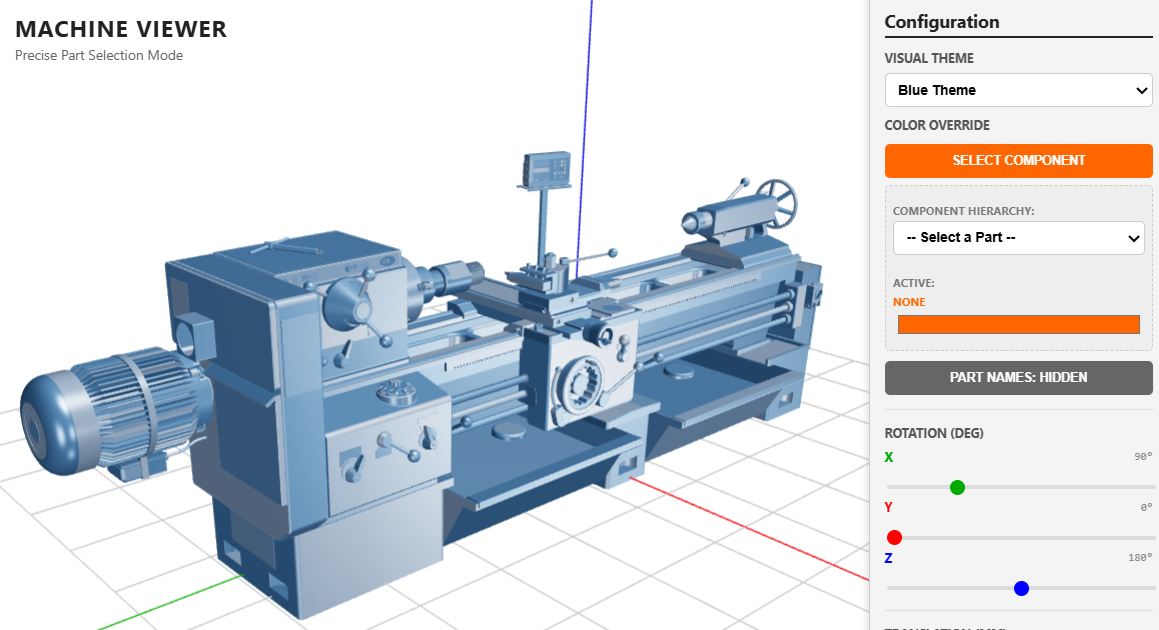

2. Rendering Technology

The visuals are rendered via WebGL using the Three.js library, focusing on high-contrast PBR (Physically Based Rendering).

- PBR Materials: The spheres use `MeshStandardMaterial` with `metalness: 1.0` and `roughness: 0.05` to create a chrome-like finish that reflects the surrounding light.
- Dynamic Emission: The `emissiveIntensity` of the materials is mapped directly to the audio volume, causing the grid to "pulse" and glow in sync with the beats.
- Backface Outlining: The spheres use a specialized rendering trick where a slightly larger mesh is rendered with `side: THREE.BackSide`. This creates a crisp, black "cel-shaded" outline that helps the orange shapes pop against the dark background.
- Performance Optimization: The bars use `barGeo.translate(0, 0.5, 0)`. By moving the geometry's origin to the base, the engine can simply scale the Y axis instead of recalculating positions, significantly reducing CPU overhead.
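To see why the `translate` trick removes the position update, it helps to look at the vertex heights alone. The sketch below uses plain arrays rather than Three.js and assumes the bar starts as a unit-height box centered on the origin; the `scaleY` helper is hypothetical and only mimics what setting `mesh.scale.y` does to the geometry.

```javascript
// Y coordinates of a unit-height box centered on the origin (assumed bar shape):
// the bottom face sits at -0.5 and the top face at +0.5.
const centeredYs = [-0.5, 0.5];

// Equivalent of barGeo.translate(0, 0.5, 0): bake a +0.5 offset into the
// vertices once, so the bottom face sits exactly on y = 0.
const baseAlignedYs = centeredYs.map((y) => y + 0.5);

// Hypothetical helper mimicking the effect of mesh.scale.y on vertex heights.
function scaleY(ys, factor) {
  return ys.map((y) => y * factor);
}

// Centered geometry grows in both directions, so the mesh would also need
// its position.y raised every frame to stay on the floor.
const centeredScaled = scaleY(centeredYs, 4);    // [-2, 2]

// Base-aligned geometry keeps its bottom at y = 0; only the scale changes.
const baseAlignedScaled = scaleY(baseAlignedYs, 4); // [0, 4]
```

With the base pinned at zero, a per-frame update reduces to a single assignment to the bar's Y scale.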

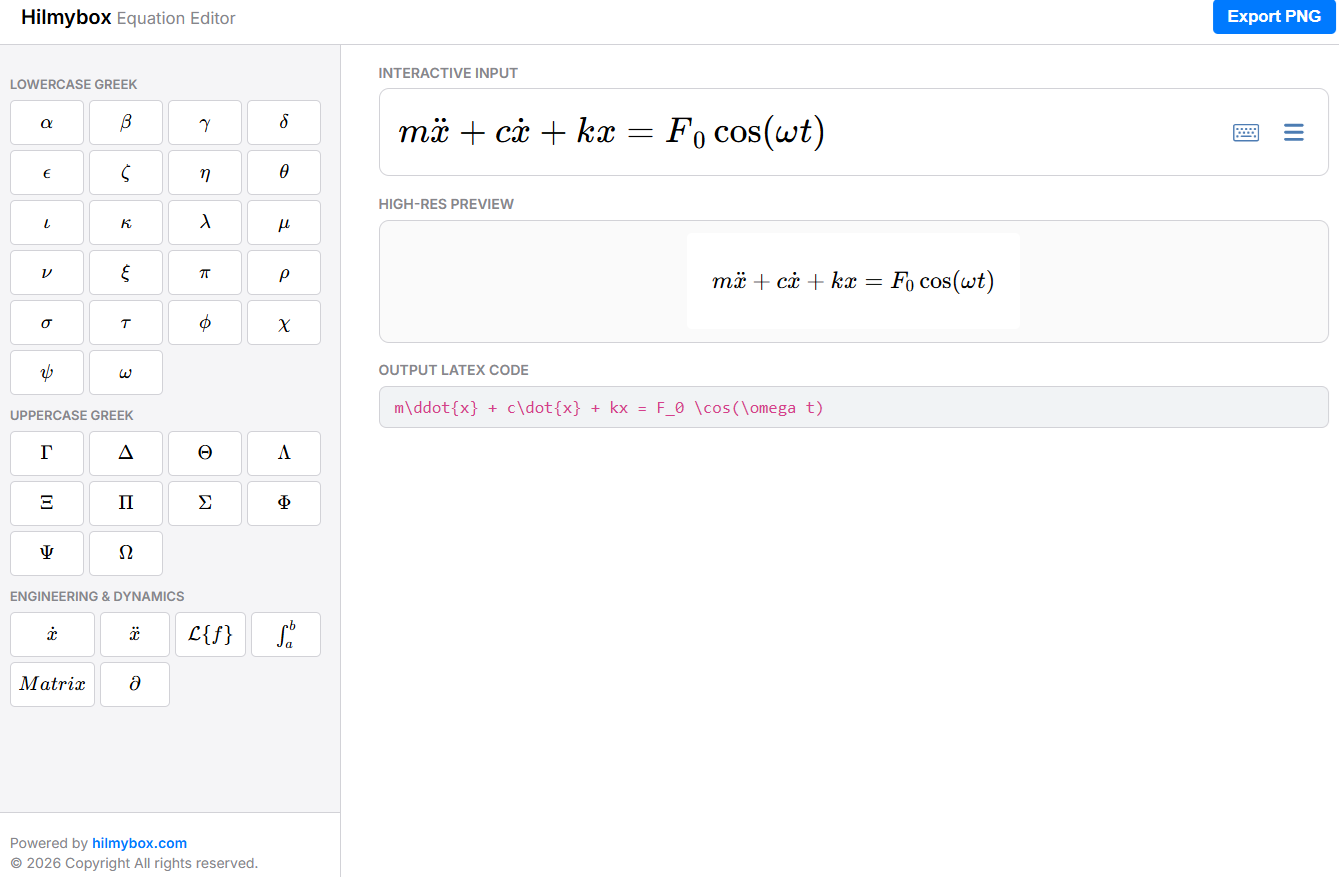

3. Mathematical Tools

The precision of the visualizer relies on Signal Processing and Coordinate Geometry.

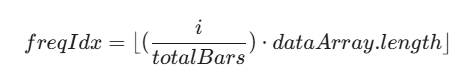

- Frequency Mapping: Since the audio data is a linear array and the visualizer is a 2D grid, the code uses a normalized index mapping:

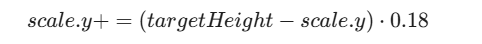

- Smoothing (Lerping): To prevent jarring visual jitter, the bars use linear interpolation for their height transitions, moving a fixed fraction of the way toward the target height on each frame.

- Spherical to Cartesian Mapping: The `OrbitControls` use spherical coordinate math to allow the user to orbit the scene, while the `controls.target.set(-4, 0, 0)` shift creates an asymmetric, cinematic composition by moving the point that the camera orbits and looks at away from the world origin.
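The frequency mapping and smoothing steps can be illustrated together. This is a sketch under assumptions, since the exact formulas are not reproduced in the text: it flattens each grid cell to a linear index, normalizes it to a fraction of the grid, and scales that fraction to the bin range; the smoothing follows the standard lerp form $h \leftarrow h + \alpha (h_{\text{target}} - h)$. The names `binForCell`, `lerp`, and `alpha` are hypothetical.

```javascript
const GRID_SIZE = 12;   // 12 x 12 grid, as described in the text
const BIN_COUNT = 256;  // frequencyBinCount for fftSize 512

// Assumed normalized index mapping: grid cell -> fraction of grid -> FFT bin.
function binForCell(row, col, gridSize = GRID_SIZE, binCount = BIN_COUNT) {
  const cellIndex = row * gridSize + col;        // flatten 2D cell to 1D
  const t = cellIndex / (gridSize * gridSize);   // normalize to [0, 1)
  return Math.floor(t * binCount);               // scale to a valid bin index
}

// Standard linear interpolation: move `alpha` of the way from current to target.
function lerp(current, target, alpha) {
  return current + (target - current) * alpha;
}

// Example per-frame update for one bar, given `data` from
// analyser.getByteFrequencyData (values 0 to 255):
//   const target = data[binForCell(row, col)] / 255;
//   bar.height = lerp(bar.height, target, 0.2);
```

A smaller `alpha` gives smoother but laggier bars; a value of 1 disables smoothing entirely.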

4. Front-End Environment

The environment is a Modern Web Application built with a modular architecture.

- Import Maps: The project uses an `importmap` to manage dependencies. This allows the browser to resolve `three` and its `addons` directly from CDNs (Content Delivery Networks) using standard ES6 `import` syntax without needing a build tool like Vite or Webpack.
- Glassmorphism UI: The sidebar utilizes `backdrop-filter: blur(20px)` and semi-transparent RGBA backgrounds to create a modern, high-tech interface overlay that remains readable over the moving 3D background.
- Asynchronous I/O: The file upload system utilizes `FileReader` and `decodeAudioData` (Promises) to process audio files in a background thread, preventing the UI from freezing during heavy data decoding.
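The asynchronous upload path might look roughly like the following. It is a sketch, not the project's code: `readAsArrayBuffer` and `loadAudioFile` are hypothetical helper names, and the `ReaderCtor` parameter exists only so the example can be exercised outside a browser. In a page it defaults to the built-in `FileReader`, and `audioCtx` would be an `AudioContext`.

```javascript
// Wrap the event-based FileReader API in a Promise.
// ReaderCtor is an assumption for testability; browsers supply FileReader.
function readAsArrayBuffer(file, ReaderCtor = globalThis.FileReader) {
  return new Promise((resolve, reject) => {
    const reader = new ReaderCtor();
    reader.onload = () => resolve(reader.result);
    reader.onerror = () => reject(reader.error);
    reader.readAsArrayBuffer(file);
  });
}

// Read the uploaded file, then hand the raw bytes to the audio context.
// decodeAudioData returns a Promise that resolves to the decoded AudioBuffer,
// so the UI stays responsive while decoding runs.
async function loadAudioFile(file, audioCtx, ReaderCtor = globalThis.FileReader) {
  const bytes = await readAsArrayBuffer(file, ReaderCtor);
  return audioCtx.decodeAudioData(bytes);
}

// Browser usage (hypothetical element and variable names):
//   input.addEventListener("change", async () => {
//     const buffer = await loadAudioFile(input.files[0], new AudioContext());
//   });
```

Because both steps are awaited rather than blocking, any rendering loop driven by `requestAnimationFrame` keeps running while the file is read and decoded.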